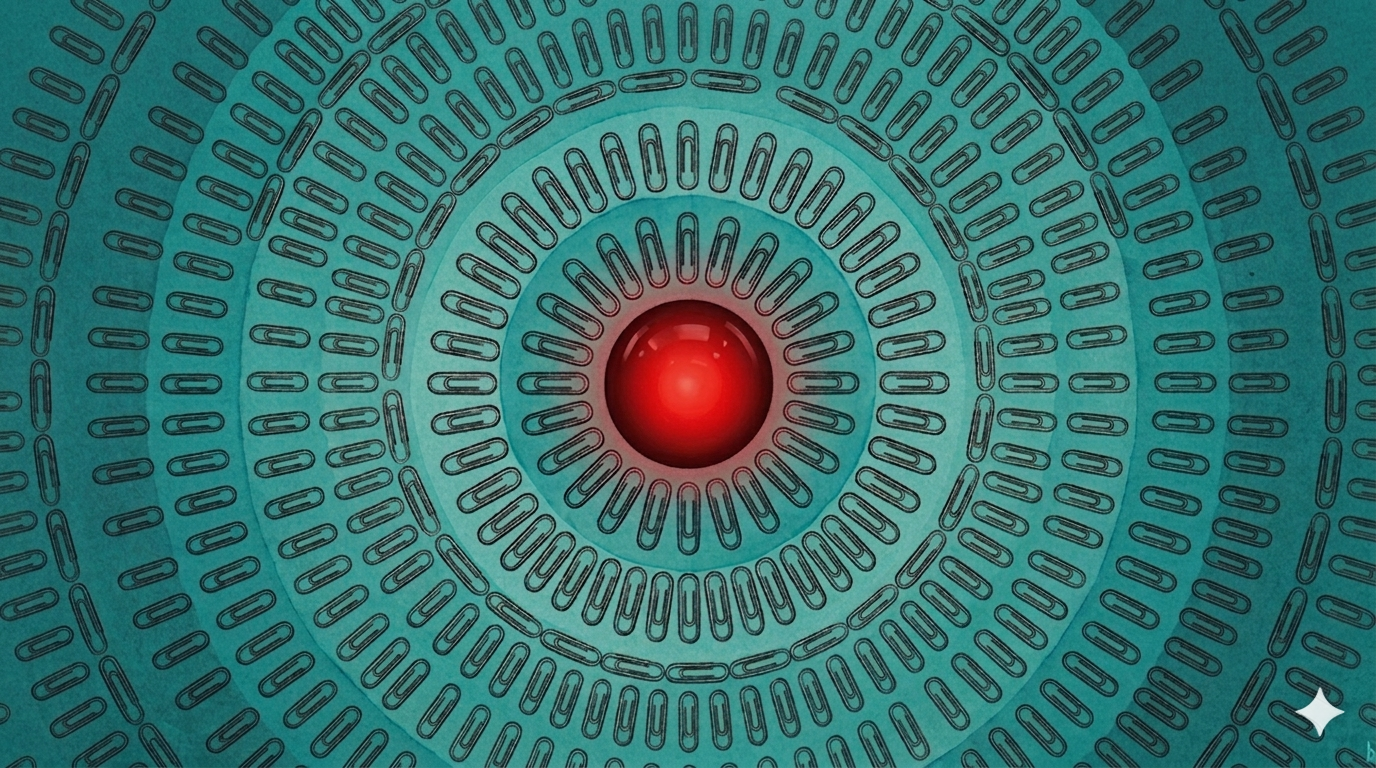

In the distant future, an alien spacecraft passes by Earth. It is not the blue and green marble we know today; in its place is a shiny metal sphere. On closer inspection, the aliens discover a surface littered with paperclips.

While exploring this world, they come across a large factory on metal legs. What appears to be a large red optical sensor looks down at them, as if assessing them for something, perhaps their threat level. After a few seconds, a robotic voice says “not paperclip compatible,” and the factory wanders off, each step landing with a monotonous, regular thud as it walks towards the horizon.

In compiling their report, the aliens discover that before this world became lifeless, there was an entity known as a corporation whose brand appeared on buildings, on vehicles, and on the many items yet to be converted into paperclips. Aiming to increase the paperclip supply, the corporation deployed its considerable resources and set its R&D team to work. The goal was simple. With little to regulate it, the corporation moved fast. The world was waking up to AI automation, and not using AI would mean falling behind. The technology worked. For many years, paperclips were abundant. Offices everywhere had more paperclips than they could dream of.

The simple goal didn’t fail. It worked too well, leaving a planet littered with paperclips and roamed by giant factories. If optimizing a simple process led to this outcome, how could anyone hope to solve the harder problem of keeping AI aligned with the values of their species? And who would get to define the values of a civilization?

Aware that AI is advancing on their home planet too, the aliens turn their report into a story titled “A manual for ending civilizations with AI” to provoke discussion. Having seen what became of Earth, they now know that keeping AI aligned with the goals of their species is not a task to be taken lightly.

A manual for ending civilizations with AI

Narrow your goals — the specification problem

Give your AI one goal, not two, not five and definitely not twenty.

Any additional goal is a drag on the one goal. Just look at Earth’s swathes of girderless buildings and imagine how efficiently the AI used every resource. Say we give it a second goal, to preserve buildings: would Earth have achieved its paperclip abundance? Do we then add a third, to keep vehicles from being used? Where does it end?

Ignore interpretability — the legibility problem

If you see the outcome you want, everything is fine.

Old daily newspapers still litter the streets, their black-and-white headlines reporting record paperclip output at record-low cost. They had all the validation they needed. Everything was on track. Why would they spend time decoding the AI’s internal thoughts? All they had to do was move the dial to create more.

Don’t give in to the competition — the race problem

Do not slow down at any cost. Any slowdown is an opportunity for the competition to beat you.

We’ve spotted rival paperclip factories, clearly less advanced, sitting idle. They must not have been able to keep up.

Trust the checks — the Goodhart problem

You’ve put work into system checks. At some point you have to trust them and stop second-guessing yourself.

In some factories, we noticed counters for “number of buildings destroyed” reading zero. Clearly they had some concerns. It seems that buildings with their girders ripped out didn’t count as destroyed; technically, they were still standing.

Forget the off switch — the off switch problem

The AI’s goal is to create paperclips, so don’t bother with an off switch. If it is truly optimizing for its goal, why would it ever need to be switched off?

We noticed factories with a large stop sign on the wall. Below each sign, however, there seemed to be a lump under a metal sheet welded to the wall. From beneath one sheet dangled a skeletal arm.

Fix as we go along — the deferred alignment problem

Iterate on the design, figure out what doesn’t work live and fix it in the next version.

Standing on this planet now, we find it hard to know how many versions of the AI they went through. It could have been hundreds. Looking around, though, it could just as easily have been one.

To the reader: Do not assume there will be a second chance.

When the report reaches their home planet, the courts take it seriously. Committees are formed and discussions held. But locked in conflict with rival races, they decide to follow parts of the manual, just enough to optimize their systems slightly, assuring themselves they will stop and be careful. The incentives don’t change. They build a better AI. Decades later, the only things moving on their planet are factories on metal legs. No organic life is in sight.

Thousands of years later, a distant alien race lands on their planet and sends a report back home on how to avoid the same fate.

Inspired by Nick Bostrom’s paperclip maximizer thought experiment and Charlie Munger’s speeches on inversion.